Many mobile app makers use app store A/B testing tools to improve their listings and increase downloads.

While there’s no replacement for a strong App Store Optimization (ASO) strategy, there are some insights mobile app marketers can glean from in-app mobile A/B testing to improve their listing.

We’ll outline how you can use mobile A/B testing to inform your ASO strategy and continue to drive growth for your mobile application.

Optimize Your App Store Listing with In-App A/B Testing

1. Test value props and messages on existing users

If you’re wondering whether specific keywords, messages or ads will drive mobile user acquisition, mobile A/B testing lets you see how existing users react before you launch to the broader market.

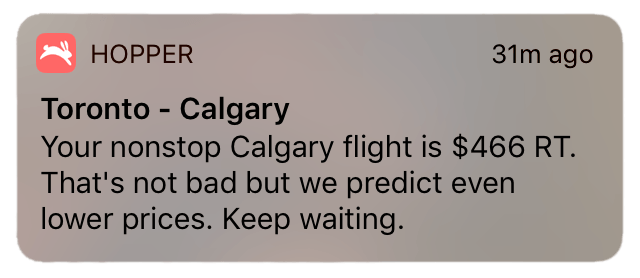

Take the message or value prop you want to use for acquisition and test it (or a close variant of it) with existing users. You can run this experiment through a push notification, an in-app inbox message, or a splash screen.

Launch first with whichever message gets better engagement. Existing users were once non-users, so messages that resonate with them will probably resonate with potential users too.
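Deciding which message "gets better engagement" is safer with a significance check, because with small samples one variant can look better purely by chance. The sketch below uses a standard pooled two-proportion z-test on engagement counts (for example, taps per message sent). The function names and the counts in the usage line are hypothetical, and many A/B testing platforms report significance for you, so treat this only as an illustration of the idea.

```python
import math


def two_proportion_z_test(clicks_a: int, sends_a: int,
                          clicks_b: int, sends_b: int) -> tuple[float, float]:
    """Compare engagement rates of message A and message B.

    Returns (z, two-sided p-value) from a pooled two-proportion z-test.
    A positive z means B's rate is higher than A's.
    """
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    # Pooled rate under the null hypothesis that both messages perform the same.
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def pick_message(clicks_a: int, sends_a: int, clicks_b: int, sends_b: int,
                 alpha: float = 0.05) -> str:
    """Return "A", "B", or "no clear winner" at significance level alpha."""
    z, p_value = two_proportion_z_test(clicks_a, sends_a, clicks_b, sends_b)
    if p_value >= alpha:
        return "no clear winner"
    return "B" if z > 0 else "A"


if __name__ == "__main__":
    # Hypothetical counts: 120 of 2,000 users tapped message A, 165 of 2,000 tapped B.
    print(pick_message(120, 2000, 165, 2000))  # prints B
```

If the result is "no clear winner", keep the test running or treat the two messages as equivalent rather than launching the one that happens to be slightly ahead.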

Tip: Consider segmenting some of your A/B tests by how active users are or how long they have been using your app. (Most mobile A/B testing platforms let you segment on user behavior or attributes like these.) This way, you’ll understand what appeals to long-term users, who are the ideal users you’d like to attract. Whichever segment responds best, you’ll gain insight into what early users and more established users react to, which may inform how you position your app in app stores and other acquisition channels.

2. Increase social proof for your app

Getting more app ratings and reviews is vital to gaining more visibility for your listing.

The star rating itself also matters to users. Research from Apptentive found that going from 3 to 4 stars in the Apple App Store can double downloads.

You can use A/B testing to increase the number of positive reviews you collect. Here are some of the elements you’ll want to test to optimize your requests for ratings and reviews in-app:

- When you request a review. Test not only the time of day but also how long after sign-up you ask, or which specific actions indicate a user is likely to write a positive review.

- How you ask for a review. A/B test the copy, images or call-to-action to see what drives the most completions.

- Where you ask for reviews. You can base this on the section of the app the user is in or the feature they’re using.

- How you collect reviews or ratings. Test showing a pre-prompt before you ask for a rating, as well as the flow that leads users to the request. If you’re using multiple channels to communicate with users (push, email, web, etc.), test each channel to see which is most effective for driving new reviews and ratings.

Using in-app behaviors to trigger review or rating requests is one of the most effective ways to drive positive reviews and ratings. However, you’ll have to make sure the behaviors you choose correlate with user satisfaction. Basing the request on something like days since download, or on whether users have completed onboarding, may be too generic to indicate that a user is ready and willing to leave a positive rating. Actions that may signal a user is happy enough to leave a positive review include:

- Completed a purchase

- Increased their subscription or average order value

- Used a specific feature that indicates a deep investment in the app

- Returned to your app a certain number of times in a week

- Spent a particular number of hours browsing content in-app
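The signals above can be combined into a simple trigger rule. This is a minimal sketch, assuming a hypothetical `UserActivity` record and hypothetical thresholds (`MIN_WEEKLY_SESSIONS`, `MIN_BROWSING_HOURS`); it only decides whether to ask. Displaying the prompt would still go through the platform’s own review API (for example, StoreKit on iOS or the Play In-App Review API on Android), which may limit how often the prompt actually appears.

```python
from dataclasses import dataclass


@dataclass
class UserActivity:
    """Hypothetical per-user snapshot; field names are illustrative, not an SDK schema."""
    completed_purchase: bool = False
    raised_order_value: bool = False
    used_key_feature: bool = False
    sessions_this_week: int = 0
    hours_browsing: float = 0.0
    already_prompted: bool = False


# Hypothetical thresholds; tune them (or A/B test them) for your own app.
MIN_WEEKLY_SESSIONS = 4
MIN_BROWSING_HOURS = 2.0


def satisfaction_signals(user: UserActivity) -> list[str]:
    """Return the names of the satisfaction signals this user has shown."""
    signals = []
    if user.completed_purchase:
        signals.append("completed_purchase")
    if user.raised_order_value:
        signals.append("raised_order_value")
    if user.used_key_feature:
        signals.append("used_key_feature")
    if user.sessions_this_week >= MIN_WEEKLY_SESSIONS:
        signals.append("frequent_sessions")
    if user.hours_browsing >= MIN_BROWSING_HOURS:
        signals.append("long_browsing")
    return signals


def should_request_review(user: UserActivity) -> bool:
    """Ask only after at least one satisfaction signal, and only if not already asked."""
    return not user.already_prompted and bool(satisfaction_signals(user))


if __name__ == "__main__":
    print(should_request_review(UserActivity(sessions_this_week=5)))  # prints True
    print(should_request_review(UserActivity()))  # prints False
```

Returning the list of signals, rather than a single boolean, makes it easy to log which behavior triggered each request and later compare ratings across triggers.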

Before launching a review trigger to all users, test your prompt on a small, random sample of users to see which prompt they respond to best. You may discover that a particular prompt is poorly timed to drive reviews, or that it drives negative ratings. A/B testing can help mitigate this risk.
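One common way to choose a small, random, and stable pilot group is to hash each user ID into a bucket. This is a sketch under that assumption; the experiment name, percentages, and helper names are hypothetical, and a testing platform would normally handle assignment for you. Hashing, rather than drawing a fresh random number on every launch, keeps each user in the same group across sessions.

```python
import hashlib


def bucket(user_id: str, experiment: str, buckets: int = 10_000) -> int:
    """Map a user to a stable bucket in [0, buckets) for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets


def in_pilot(user_id: str, experiment: str, percent: float) -> bool:
    """True for roughly `percent`% of users, chosen pseudo-randomly but stably."""
    return bucket(user_id, experiment) < percent * 100


def assign_prompt(user_id: str, experiment: str, prompts: list[str]) -> str:
    """Split pilot users evenly across candidate review prompts.

    A separate salt is used so the variant split is independent of pilot membership.
    """
    return prompts[bucket(user_id, experiment + ":variant") % len(prompts)]


if __name__ == "__main__":
    user = "user-42"
    if in_pilot(user, "review-prompt-v1", percent=5):
        print(assign_prompt(user, "review-prompt-v1", ["after_purchase", "after_5_sessions"]))
```

Raising `percent` later only adds users to the pilot; everyone already in it stays in, which keeps their results comparable.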

Tip: Consider digging into your qualitative reviews and user feedback for commonalities and trends that could inform the keywords and messaging direction you take in the app store.

3. Use A/B test and app usage data to inform your ASO strategy

Through A/B testing and app usage data, you can gain insights that may inform your app store optimization strategy.

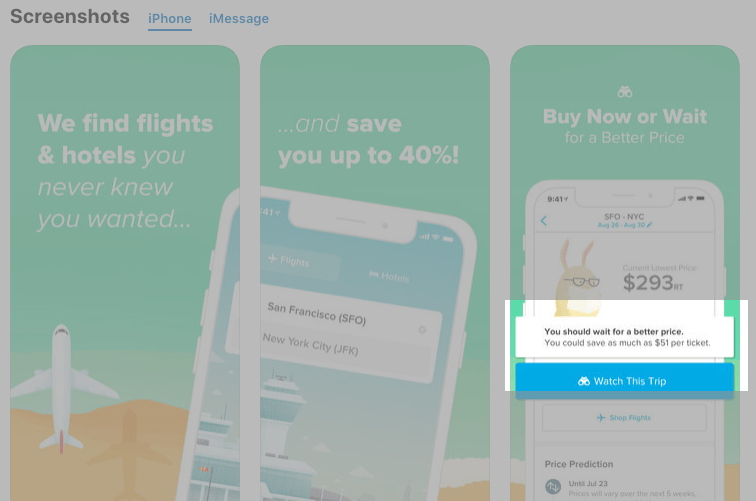

For example, seeing which features get the most usage can inform which features you highlight and which screenshots you showcase in your listing. A/B testing those images with a platform like SplitMetrics or Storemaven can help confirm whether popular in-app features or content drive first-time downloads. TheTool can help you track your listing’s conversion rate to download.

You could also test or segment users based on the keyword or channel through which they discovered your app. This can show which acquisition strategy drives the most active users, retains the most users over time, or brings in users with the highest average spend, all of which can inform your acquisition spend and strategy.
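Segmenting by acquisition source comes down to a group-by over joined attribution and usage data. This is a minimal sketch, assuming a hypothetical `InstalledUser` record that already carries the keyword or channel behind each install; in practice that join would come from your attribution and analytics tools, and the field names here are illustrative only.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class InstalledUser:
    """Hypothetical record joining attribution data with in-app usage."""
    channel: str            # keyword or channel that drove the install
    weekly_sessions: float  # activity level
    retained_day_30: bool   # still active 30 days after install
    spend: float            # total in-app spend


def summarize_by_channel(users: list[InstalledUser]) -> dict[str, dict[str, float]]:
    """Per channel: user count, average activity, day-30 retention, average spend."""
    groups: dict[str, list[InstalledUser]] = defaultdict(list)
    for user in users:
        groups[user.channel].append(user)

    summary = {}
    for channel, members in groups.items():
        n = len(members)
        summary[channel] = {
            "users": n,
            "avg_weekly_sessions": sum(u.weekly_sessions for u in members) / n,
            "day_30_retention": sum(u.retained_day_30 for u in members) / n,
            "avg_spend": sum(u.spend for u in members) / n,
        }
    return summary
```

Comparing these three metrics side by side matters because the channel that brings the most installs is not always the one that brings the most engaged or highest-spending users.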

Another great place to A/B test in your product is your onboarding experience or funnel. Comparing completion rates across onboarding variations that present different value props can inform the messaging you use in the app store, and vice versa. For example, if the language and value prop in your app store listing differ from your onboarding experience, and sign-up or completion rates are low, it may be worth testing whether making them match affects user activation or your download rate.

By testing both in-store and in-app, you can see whether the value props in each are driving the desired actions (downloads in the app store, sign-ups in the app) and how changing one affects the other. That brings us to our final point.

How A/B Testing Can Bring Your Mobile Marketing & Product Teams Together To Drive Growth

At the end of the day, your product and marketing teams have similar goals: to grow your user base and increase revenue for your app.

Using A/B testing to optimize your mobile users’ journey, from app store discovery to the post-activation experience, can shorten the time it takes to improve your listing or drive desired in-app behavior, which helps you acquire and keep more users.

If your product and marketing experimentation teams get into the habit of sharing their learnings with one another, you’ll be better equipped to ask (and find answers to) bigger questions around your app’s value props, top features, and the projects or goals you should be executing on next. Testing data could also inform your product development roadmap or personalization strategy (based on acquisition channel), or your marketing team’s paid social campaigns or multi-channel communications strategy.

Most mobile A/B testing platforms let you test in-app without needing to update your app in the store—which makes running tons of experiments easier and faster. You can also choose one that has a Visual Editor (like Taplytics) so that team members without coding knowledge can set up simple copy or graphics experiments. Having this tool can also reduce the burden on engineering or product management to be solely responsible for in-app testing (although they should own the process overall).

Today we have a guest post from Jillian Wood, who is Content Marketing Manager at Taplytics, a mobile A/B testing company focused on delivering remarkable product and marketing experiences at scale.